Google BigQuery connector for pivot tables, charts

Google BigQuery is supported by SQL data source via official BigQuery ODBC driver.

SeekTable democratizes data access by offering a user-friendly visual interface for non-technical users to explore BigQuery data, at the same time allowing IT team to use all power of SQL for configuring BigQuery-based data cubes.

- BigQuery is used as a live data source: no need to refresh reports as users always get actual data (direct query mode).

- Reports may be shared with a team or published for embedding (or public link sharing). Users can subscribe to reports in a self-service manner.

- The cube itself may be shared too: users can create own reports without having direct access to BigQuery API.

- Web API can be used for automated reports generation (export to Excel, multipage PDFs).

This youtube video illustrates how sample 1.3Gb data is aggregated by BigQuery in seconds and displayed as a pivot table in SeekTable.

How to configure BigQuery cube

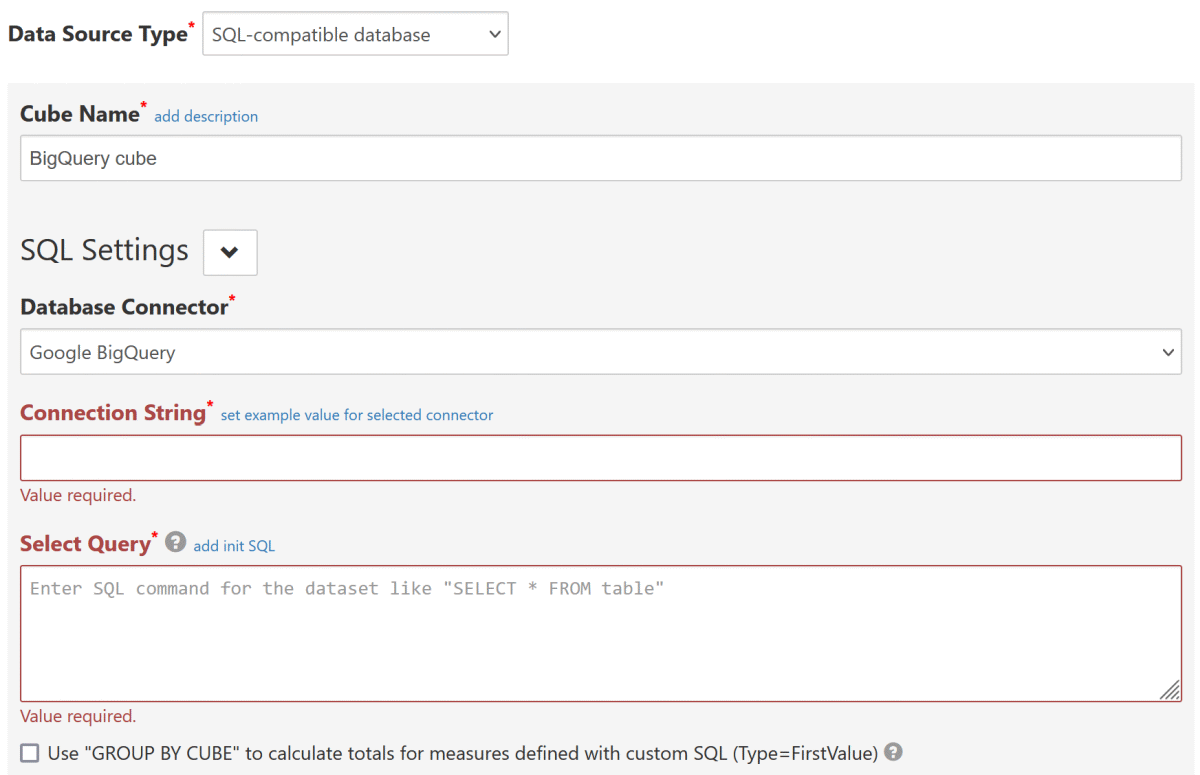

- Click the "Connect to Database" to open a new data cube configuration form.

-

Choose Data Source Type:

SQL-compatible database(this opens SQL Settings section). -

Choose Database Connector:

Google BigQuery

-

Connection String should include settings that are supported by an official BigQuery ODBC driver:

ClientId=oauth_client_id;ClientSecret=oauth_client_secret;RefreshToken=your_google_oauth_refresh_token;Catalog=your_api_project_id;

Catalog The name of your BigQuery project. This project is the default project that the Simba ODBC Driver for Google BigQuery queries against. RefreshToken The refresh token that you obtain from Google for authorizing access to BigQuery. Section below explains how to generate the token. ClientId OAuth Client ID used for refresh token creation (may be omitted if refresh token was generated with ODBC driver's get_refresh_token.pyscript).ClientSecret Corresponding OAuth Client secret (may be omitted if refresh token was generated with ODBC driver's get_refresh_token.pyscript). - Configure other required settings as described in SQL-compatible data source. As a minimum you need to specify Select Query (which determine's the dataset) and keep Infer dimensions and measures by dataset checked.

How to get a refresh token

If you don't have a refresh token provided by your BigQuery administrator, you can get it in one of the following ways:

- Allow access to BigQuery for SeekTable's Client ID on this page

- Create your own OAuth Client ID and get a refresh token in the OAuth playground

- Use a python script that is provided with BigQuery ODBC-driver: see BigQuery ODBC driver Installation and Configuration Guide for more details and instructions.

Allow access to BigQuery for SeekTable's Client ID

-

Get an access token for SeekTable's Client ID

This button opens a pop-up window. If you don't see it, please allow pop-ups for this page. -

-

Specify the following "ClientId" and "ClientSecret" values in the connection string:

ClientId=@BQ_SEEKTABLE_CLIENT_ID;ClientSecret=@BQ_SEEKTABLE_CLIENT_SECRET;

Create your own Client ID

- Go to your Google Cloud Console - Credentials (for an appropriate project selected)

- Click "Create Credentials" - "OAuth Client ID"

Fill the form:

Application Type = Web Application

Name = SeekTable Client

Authorized redirect URIs: click "ADD URI" and enter:https://developers.google.com/oauthplayground

- In "Oauth client created" dialog you get "Client ID" and "Client secret" values

- Go to Google OAuth Playground

- Click "Gear" icon (top-right corner), click on "Use your own OAuth credentials" and enter your "Client ID" and "Client secret" values.

- In the left panel ("Select & authorize APIs" step) enter

https://www.googleapis.com/auth/bigquery

and click the "Authorize APIs" button. - You should see "Refresh token" filled. Use this value in the connection string for SeekTable's BigQuery connector.

Don't forget to specify corresponding

ClientIdandClientSecretconnection string options.

Alternatively, you can

(section "Configuring Authentication on a Non-Windows Machine")

and generate a refresh token using Simba-provided python script get_refresh_token.py.

Inferring schema for tables with ARRAY columns

If your table has an ARRAY-type columns and you got an error like Error converting invalid input with source encoding UTF-8 using ICU:

- exclude these columns from the Select Query, for example:

SELECT * except(array_col1, array_col2) FROM some_table

and save the cube form. - Then go to the cube configuration form; you should see dimensions & measures that are populated automatically.

- Change the query to:

SELECT * FROM some_table

do NOT check Infer dimensions and measures by dataset. Save the form.

Date-part dimensions calculated with SQL expressions

To define date-part dimensions (date's "year", "month", "day" etc) you may add dimensions defined with an appropriate SQL expression:

- add new dimension with Type=

Field - fill Name with some unique value: say, "date_column_year"

- add one Parameter which should be a date-part BigQuery SQL expression:

- For year:

EXTRACT(YEAR FROM date_column) - For month:

EXTRACT(MONTH FROM date_column)+ Format={0:MMM}(Jan, Feb etc) or{0:MMMM} (January, February etc) - For day:

EXTRACT(DAY FROM date_column) - For day-of-week:

EXTRACT(DAYOFWEEK FROM date_column)-1+ Format={0:ddd}(0=Sun, 1=Mon, 2=Tue etc) or{0:dddd}(0=Sunday, 1=Monday, 2=Tuesday etc) - For quarter:

EXTRACT(QUARTER FROM date_column) - For week-of-year:

EXTRACT(ISOWEEK FROM date_column)

- For year: